"Can AI do this?"

For a while, this was the question I kept asking myself:

Can it summarize this reading?

Can it generate examples?

Can it help students get unstuck faster?

It’s a reasonable question. It’s also the wrong one — or at least, it turned out to be the wrong starting point.

I noticed the shift when I was revising an assignment I’ve used for years. I caught myself thinking less about what the tool could produce and more about what I was actually asking students to practice.

Not complete.

Not submit.

Practice.

That’s when the question changed.

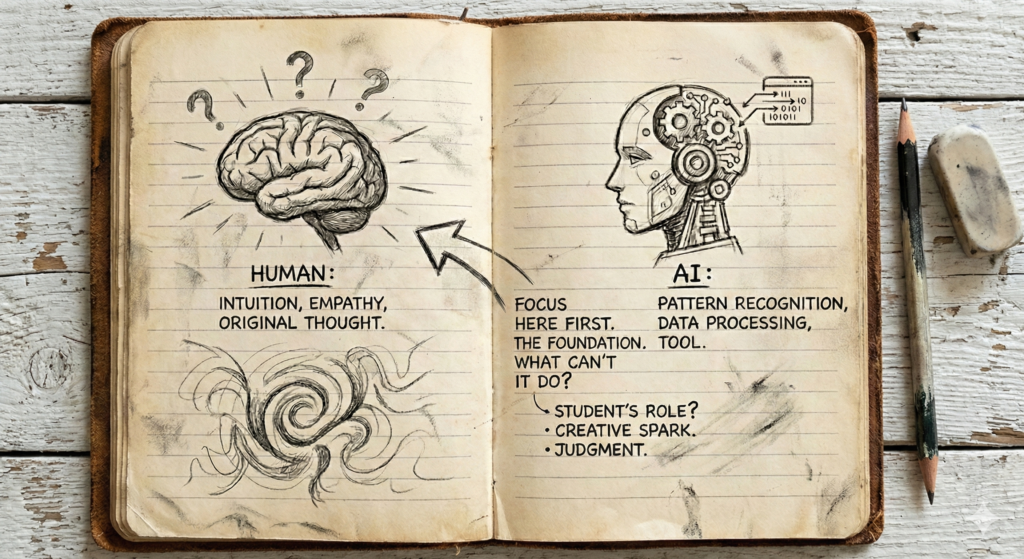

Instead of “Can AI do this?” I started asking things like:

What part of this work matters most for what students need to learn?

Where does human judgment, specifically the educator’s, show up?

What would students lose if the tool quietly took control of what was being created or analyzed?

Those questions slowed everything down. In a good way.

The “can it?” question is technical.

It’s about capability.

It assumes that if something is possible, the next step is to decide how to allow it.

But teaching decisions don’t work like that. They’re less about permission and more about intention.

Once I stopped leading with capability, I started seeing how often AI flattens distinctions that matter in education. For example:

- It can generate text, but it can’t tell when an idea is half-formed in a productive way.

- It can offer structure, but it can’t see when confusion is actually part of learning.

- It doesn’t know which parts of an assignment are meant to be efficient and which parts are meant to be uncomfortable.

That distinction — the difference between helpful and appropriate — doesn’t show up in a prompt.

What surprised me most was how much clearer my own thinking became once I stopped outsourcing the first question.

I wasn’t deciding whether to “allow AI” or “ban AI.” I was deciding what kind of thinking I wanted to protect, what kind I wanted to support, and what kind I was willing to let go.

Sometimes the answer was: Sure, use the tool.

Sometimes it was: Not here. Not yet.

And sometimes it was: I need to rethink this assignment entirely.

None of those answers came from the technology itself.

They came from slowing down long enough to notice what the work was really doing.

I still ask what AI can do. That question hasn’t disappeared. It just comes later now — after I’ve clarified what matters, what’s fragile, and what I’m responsible for as the person designing the learning experience.

That shift hasn’t made things simpler. But it has made the decisions feel more honest.

And that, it turns out, is a much better place to start.

I share more of this kind of thinking in my newsletter, Draw Me a Map.